And UX benchmarking can help

Note: this article was originally published in UX Collective on Medium.com.

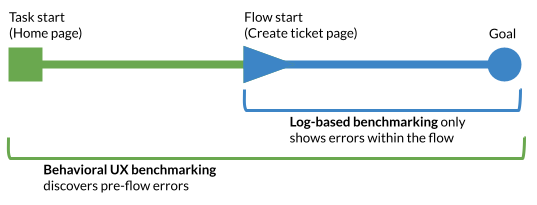

tl;dr: With log-based analytics alone, we can’t see users who want to start a task but cannot find it. This leads us to overestimate success rates. With behavioral UX benchmarking, we know each user’s intent, so we know how many users fail to start a task flow due to pre-flow usability errors. This navigation data is crucial to creating a usable product.

Every product team wants to improve their user experience, and they must do some form of UX measurement to make sure that improvement is happening. There are many ways to measure the improvement (or degradation) of a product’s user experience over time. To name a few:

- Change in log-based metrics (flow completion rate, feature discovery rate, etc.)

- Change in survey-based UX metrics (SUS, UMUX-lite, etc.)

- Change in behavioral UX metrics (task completions, time-on-task, etc.)

This article will focus on one thing: the unique data type we gather from behavioral UX benchmarking and why it’s valuable for improving your product.

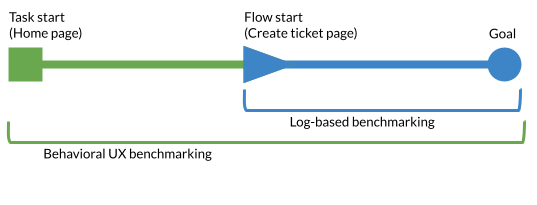

Behavioral UX benchmarking vs. Log-based Benchmarking

Behavioral UX benchmarking is a method that involves giving your sample users a set of tasks within a moderated or unmoderated study, and then measuring how successfully and quickly they complete these tasks (see here if you’re not familiar). This can be combined with survey data (see the SUM method). Log-based benchmarking typically does not involve survey data and uses telemetry data from user behaviors in the product or app.

Let’s put this into an example: we want to know how easy it is to create a support request, so we measure UX metrics on that task.

For log-based benchmarking, users naturally arrive at the company website and then enter the flow to create a support request. Once users begin the task flow, we measure (through analytics/telemetry) how many users abandon or complete the task and how long it takes them.

For behavioral UX benchmarking, we invite a random set of users of the company website to complete a series of tasks. They read the task instructions (“create a support ticket…”), and then attempt the task from the home page. We measure how many users abandon or complete the task and how long it takes them.

Log-based benchmarking has many advantages (depending on your product): it’s easy to implement if your product has telemetry, it gathers highly naturalistic data, and it quickly yields large sample sizes. However, behavioral UX benchmarking has one unique advantage that comes from our explicit information on user intent: we know what all users’ goals are from the beginning of their interaction with our product (create a support ticket). This information allows us to truly understand our product’s usability in a way log-based benchmarking cannot show.

User intent shows us real navigation performance

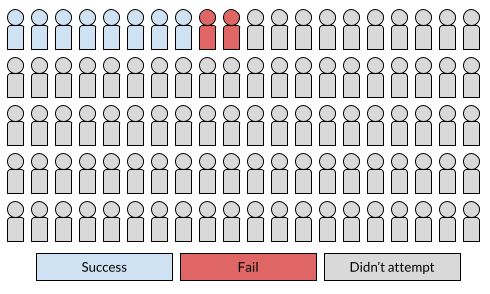

For the sake of example, let’s say we sample 100 users’ log data to understand how many can create a support ticket. Of the 100 who enter the website, 10 enter the support ticket creation flow. Of those 10 users, 8 succeed in completing the flow. This means that our success rate is 80%. This seems pretty good!

What’s wrong with this picture? We have no data on our users’ intents. The thing this picture isn’t showing is that perhaps 20 users intended to create a support ticket. This means that ten users failed due to poor site navigation, but our log data couldn’t track their intent. Our success rate for creating a support ticket would actually be 40%, with 50% of intending users failing due to navigation issues. This doesn’t seem quite as good!

With log data alone, we could never have discovered those who failed due to navigational issues.
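The arithmetic behind the two success rates can be sketched in a few lines of Python. This is only an illustration using the example numbers above; the variable names are hypothetical, and `intended_to_create_ticket` is exactly the value that log data cannot supply, so it is assumed here.

```python
# Numbers from the example above (illustrative, not real data).
visitors = 100
entered_flow = 10        # visible in logs
completed_flow = 8       # visible in logs

# Assumption: 20 visitors actually wanted to create a ticket.
# Log data alone cannot tell us this number.
intended_to_create_ticket = 20

# What the logs report: success among users who entered the flow.
in_flow_success_rate = completed_flow / entered_flow

# What is really happening: success among users who wanted to do the task.
intent_success_rate = completed_flow / intended_to_create_ticket

# Share of intending users who never found the flow.
navigation_failure_rate = (
    intended_to_create_ticket - entered_flow
) / intended_to_create_ticket

print(f"Log-based success rate:    {in_flow_success_rate:.0%}")
print(f"Intent-based success rate: {intent_success_rate:.0%}")
print(f"Lost to navigation:        {navigation_failure_rate:.0%}")
```

The only difference between the two rates is the denominator: users who entered the flow versus users who wanted to.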

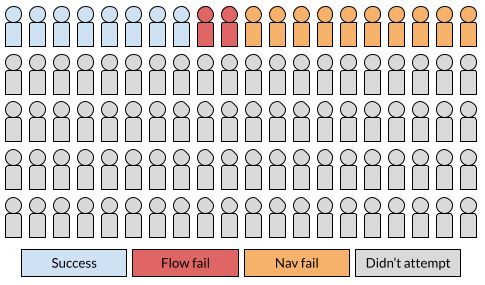

What if we had conducted behavioral UX benchmarking to measure this task? Let’s say we sample 100 users to attempt the task. Of the 100 users that attempt the task, 40 succeed, 10 enter the task and fail because of usability issues in the flow, and 50 fail to find where the flow begins.

These ratios match the true picture behind our log-based example (a 40% success rate), but they also show us the real problem: our company website suffers from poor navigation to our key flow. If we went by our log data alone, the site’s navigation issues would be hidden from our view as a product team. Behavioral UX benchmarking highlights the largest usability problem (navigation) because we know our users’ intents.
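As a rough sketch, the behavioral benchmark results above could be tallied like this. The outcome labels (`success`, `failed_in_flow`, `never_found_flow`) are hypothetical names chosen for illustration, and the counts are the example numbers from this article, not real study data.

```python
from collections import Counter

# One outcome per participant, using the example counts above.
outcomes = (
    ["success"] * 40
    + ["failed_in_flow"] * 10
    + ["never_found_flow"] * 50
)

counts = Counter(outcomes)
total = len(outcomes)

# Every participant had the same known intent, so the denominator is everyone.
rates = {outcome: n / total for outcome, n in counts.items()}

# For comparison: the rate a log-based dashboard would report,
# since it only sees participants who entered the flow.
entered_flow = counts["success"] + counts["failed_in_flow"]
in_flow_success_rate = counts["success"] / entered_flow

for outcome, rate in rates.items():
    print(f"{outcome}: {rate:.0%}")
print(f"in-flow success (log view): {in_flow_success_rate:.0%}")
```

With these numbers, the log view still reports 80% in-flow success, while the full breakdown shows that half of all participants never reached the flow at all.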

From example to big picture: the value of knowing user intent

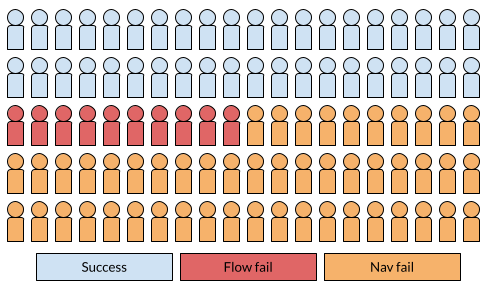

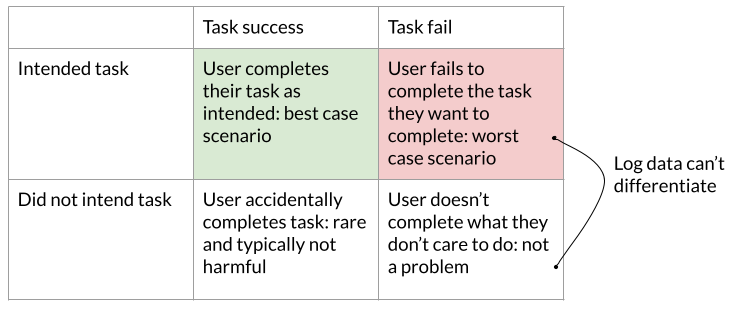

When we don’t know what our users intend to do, we can’t appropriately bucket our users’ behaviors for analysis. In fact, we end up bucketing some of the most critical group and the least critical group into the same classification.

We are almost sure to classify some users who want to do the task but can’t find it as users who simply don’t want to do the task. This hides that our product (a) has a worse overall success rate than the log data suggests, as behavioral UX measurement would reveal, and (b) specifically has pre-flow navigation problems.

So what do we do about it?

Log-based benchmarking is still valuable for all the reasons listed earlier. If your product already has telemetry, it can be a relatively cheap, one-time cost to create dashboards that measure the in-flow success rates at a high sample volume. Use this approach to continuously gather behavioral data and highlight issues in certain flow steps quantitatively. However, we miss the bigger picture when we only look at log-based data.

Your team should also go one step further, knowing that log-based benchmarking will not show you pre-flow navigation problems in your interface (or will at least make them seem less severe than they actually are). Behavioral UX benchmarking is the only way to understand your product’s navigation at scale. If time is tight, you could conduct a qualitative usability test (or a first click test, if time is even tighter) to find some navigation issues. However, this will miss some potential navigation issues and likely won’t allow you to properly prioritize which navigation fixes are most critical.

Conducting behavioral UX benchmarking at some cadence will improve your product’s navigation. Improving navigation is likely to increase purchases, order value, or company revenue overall, so there is a clear business case to invest resources in behavioral UX benchmarking.

Thanks to Thomas Stokes for his help visualizing my thoughts on the task flow.