TL;DR: No researcher or stakeholder has crystal clear acuity of how research execution leads directly to business outcomes. That is why we instead rely on a clear acuity of research rigor, something we can view in the moment of execution, to align our decision-making contexts to a useful reality. The disconnect between execution and outcomes leads non-researchers to devalue execution quality, but this is a mistake because execution quality is a fundamental requirement of good outcome quality.

Businesses hire researchers for outcomes, judge them on influence, and completely ignore the execution. This is the execution-outcome disconnect, and it is the root of the UX research field’s current existential challenge.

To improve outcome impact, researchers need two core elements: rigorous research execution (which gives us the ability to align our understanding to reality) and persuasive influence (which causes effective action)¹. Each of these is necessary, but individually insufficient, for a researcher to do what the business hired them to do.

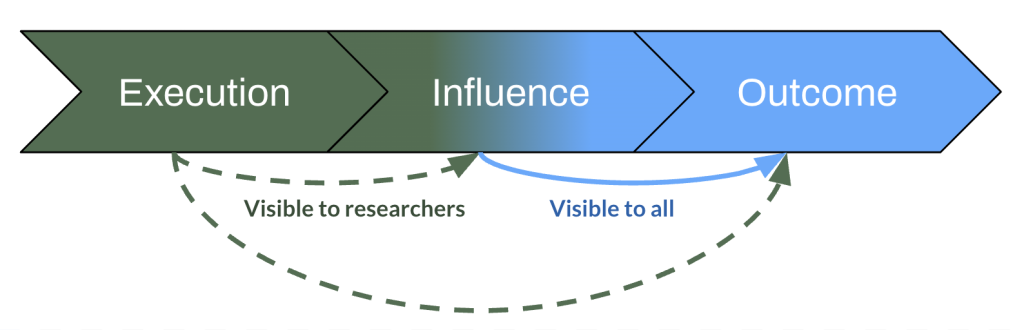

The problem research faces is that only one of these elements is clearly visible to leadership. Influence naturally becomes visible through citations, roadmaps, and subsequent stakeholder actions. Execution stays hidden because it’s not obviously relevant to stakeholders’ goals and is seen as arcane and time-consuming. This stakeholder perspective puzzles many UX researchers, so let me break down why.

The execution-outcome disconnect

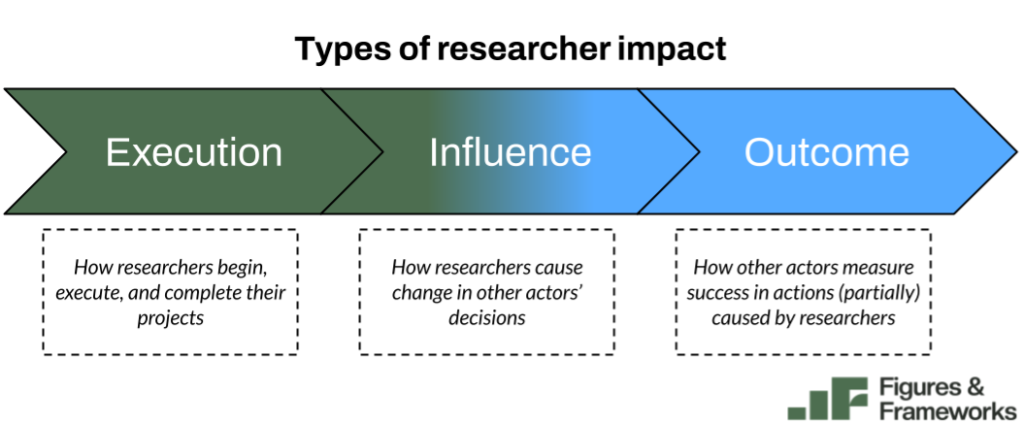

Research impact has three components in my researcher-centric model:

- Execution impact is how researchers do the research itself.

- Influence impact is how researchers cause change in other actors’ (stakeholders’) decisions.

- Outcome impact is how stakeholders measure their success in the organization (that is at least partially caused by researchers’ influence).

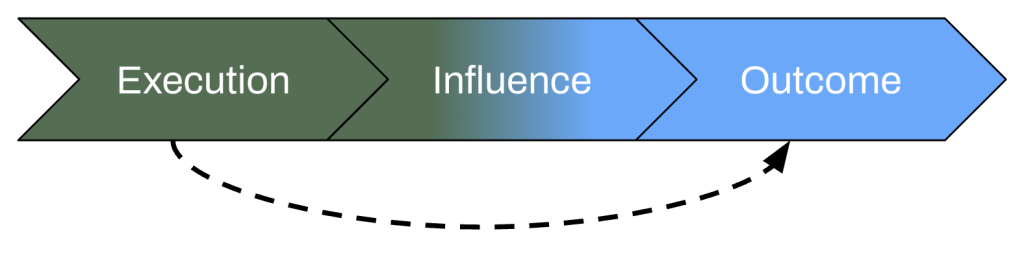

As researchers, we see the connection between all three. They are part of a natural system that follows a logical, temporal process of research².

Execution impact sets the foundation for research work: it’s how we theoretically ensure our findings are able to better align stakeholder decision-making frames to a useful reality. This is the definition of rigor. To make good decisions, we need to know what each choice may do to change the probability of desired outcomes. The connection is theoretical, but it’s the core premise of why research has any value at all.

Stakeholders know this theory in the abstract, but they are not forced to consider its concrete implications. This is the job of the researcher: defending that connection to reality. The researcher is the steward of validity. It’s this specialization of time and skill that distinguishes researchers from product managers, designers, engineers, etc. Stakeholders have neither the bandwidth nor the need to understand rigor and execution like researchers do.

Influence impact is how the signal from (hopefully rigorous) execution is amplified. It’s the distribution mechanism that connects execution to outcomes, the bandwidth of transferring understanding from execution to stakeholders who make choices based on the insights.

This is certainly more visible to stakeholders than execution. It’s the link between the insights and the outcomes that the stakeholder owns. Most (good) researchers hide quite a bit of rigor in this link because it’s not relevant to stakeholders. While good for influence quality and bandwidth, tucking details of rigor in an appendix has the side effect of keeping stakeholders less familiar with the overall research process. I’m not advocating changing this; it simply defines part of the struggle of the research role.

Outcome impact is what the business cares about most (or at all). As researchers, we know the business needs to succeed, whether to keep our jobs in existence or to make the big-picture, shared organizational goal a success. We don’t control outcome impact, but what we do is ultimately in service of it³.

Stakeholders sometimes see the connection between a researcher’s influence and their decisions. Much of this is determined by how tenacious a researcher is when claiming their influence impact: it’s an effortful task. Still, stakeholders can be driven (with good intent) to ignore the way they were influenced by research because of hindsight bias or simply because they forgot amidst their busy workload. They can also be driven (with ill intent) by ego or internal politics. Regardless of intent, the long distance stakeholders perceive between execution quality and outcome quality (the execution-outcome disconnect) shapes UX research as a domain.

Execution + Influence: necessary but not sufficient

Though stakeholders perceive execution and influence very differently, good outcomes depend equally on both of the steps that come before.

Execution and influence are necessary but not sufficient on their own to improve outcome impact. A good researcher knows this in their bones. The aim for the right level of rigor (Minimum Viable Rigor or MVR) in a research project is not to check an arbitrary box they learned in school, but to help an organization achieve the outcome impact they were hired to improve. And then, if no one ever understands the findings from the research, they simply can’t be used to make better decisions.

Execution quality is as imperative as influence quality to get good outcome quality.

I make this a point because execution is often given much more scrutiny than influence as to its usefulness. Execution quality is seen as frivolous because stakeholders don’t conceptually link execution quality to their outcome quality. Stakeholders don’t easily link the two because bad research doesn’t stink. The mechanism of failure for research execution is much less obvious than code bugs, shoddy visual design, or even a failure of research influence.

Threats to execution

The execution-outcome disconnect is a structural vulnerability. When a product team is well-resourced and research is functioning as intended, this vulnerability remains dormant. Researchers keep their research above the Minimum Viable Rigor (MVR) line and stakeholder mental models are effectively aligned with reality when making decisions.

However, this invisibility becomes critical when research consistently falls below the MVR line. Rigor does not exist for its own sake; it exists to accurately align a stakeholder’s mental model with reality. When research drops below MVR, that alignment breaks, and the resulting insights actively jeopardize business outcomes. Because stakeholders cannot “smell” the drop in execution quality, they will continue to act on these compromised insights with full confidence.

Right now, the biggest threat to execution quality is democratization in two flavors: human-generated research democratization and AI-augmented research democratization. In many cases, these are now blended, to the point where purely human democratization doesn’t really exist anymore with the proliferation of AI tooling, but it is the historical root of the disconnect’s main threat.

The main risk of human-generated research democratization is that, to increase the speed of research, teams outsource research activities to people with insufficient training. Because these other stakeholders are already specialized in other work and likely have a full plate in those duties, suboptimal cuts are made to the quality of the execution. Lack of training makes the line of “good enough” (or MVR) too blurry to walk carefully, and the research dips below MVR. This problem, which was already increasing in prevalence as budgets tightened, has only accelerated with the onset of AI. Further, AI has begun to threaten MVR even for more experienced researchers.

AI-accelerated research is the newest threat to rigor. Some see this as endemic to AI tooling, pointing to novel ways AI can fail to execute research with rigor (Antin, Holbrook), while others say this is a deeply human problem in a new wrapper, amplified by the scale of AI (Narula, Segal). The point is that the quality of AI-augmented research is unreliable and its throughput is much higher than that of human-generated research alone. Each author has their own diagnosis, and each diagnosis has its own prescription. More to come on this from me.

Outcomes of threatened execution quality

Regardless of the flavor of the threat, the core model is the same. Without strong reasoning behind execution, there is no guarantee the insights can lead to positive outcomes. We rely on abstract or academic theories of rigor to ensure our execution will lead to good outcomes. No one, not even experienced researchers, has crystal clear acuity of how execution leads directly to outcomes. That is why we instead rely on a clear acuity of rigor, something we can view in the moment of execution, to align our decision-making contexts to a useful reality.

Paying for what you can’t see

The irony of not caring about execution, or of being willing to shortcut it through democratization, is that the outcomes non-research leadership cares about ultimately suffer.

With perfect visibility into the execution-outcome connection, leadership would see how lack of execution quality bites into outcome quality. However, this visibility does not exist by nature, so it’s easy to put research in the hot seat when macro circumstances become challenging for an organization. It’s quite difficult to attribute revenue to research activities. Therefore when money is tight, research is let go.

The irony of reducing research in tight circumstances is that the company is likely to start underperforming. I’m not sure how to balance the budget of engineers and sales with a UX research team in a downturn; there is a reason I’m not a finance or operations leader. What I am sure of is that there is a massive cost when an organization cuts funding for something whose revenue generation is less tangible.

Concluding

The rigor of quality research execution has a real, but invisible, impact on outcome quality. This is structural to the discipline of research, not a shortcoming of researchers or their teams. Stakeholders aren’t ignoring rigor out of malice or laziness; rigor’s payoff is abstract or counterfactual, and counterfactuals don’t show up on dashboards. Researchers will continue to feel this pain in organizations and hiring because of the nature of the field.

My post here is not a prescription for how to fix something intrinsic to UX research. I am hoping it can provide clarity into the why behind some of our challenges and struggles. Perhaps it can spark solutions in myself or others later on. At a minimum, it can give some self-acceptance and understanding for all UX researchers out there wondering why we run into the same challenges over and over again.

Next up (in no particular order or timeframe):

- Dig more into the peer of execution: influence. I aim to lay out why it’s necessary like execution quality and how it contributes to “slow” UX research just as much as execution.

- Attempt to discuss how the execution-outcome disconnect relates to business decisions in difficult economic times.

- Propose a model for how AI proliferation interacts with/threatens execution quality, citing existing takes from the field.

A personal note

This connection (or lack thereof) between execution, influence, and outcomes is a fundamental point I wanted to make a long time ago. While writing it out at the time, I realized that I wasn’t clear on models for impact (1.0, 1.1, 1.2) or rigor (2.0, 2.1) in UX research. This sent me on a year-long writing/thinking process to sketch out the building blocks to make my point solidly. Here I’ve done so, and that feels resolved… for now 🙂. In writing and rewriting this post, I’ve found about 3 other ways for it to splinter into more work, which I mentioned above. Stay tuned!

Appendix

- Chris Chapman wrote that “rigor” is not the same as method quality. I disagree here. While I agree with every point he made in the article about what is related to quality across research as a discipline, rigor is plainly associated with methods in how we use the word in everyday life. If you said a researcher is “rigorous”, I doubt most would walk away thinking about how persuasive the research is. It’s an uphill battle to reframe that word to mean more than it does right now. The argument here, which I’ve made in different language, is simply that “quality” UX research is more than advanced methods. It also includes defining good research questions or persuasively influencing stakeholders. Chapman and I are making the same argument, but I am politely disagreeing with the label choice. To me, rigor is a component of a formative/composite construct of “research quality” rather than a reflective construct encompassing methodological superiority and persuasion effectiveness. I could perhaps even refine my definition of rigor further to say it’s when environmental variables are effectively uncovered to give the ability to help shape decisions that lead to intended consequences. ↩︎

- Temporality here is not a straightforward line, but more of a looping, iterative process. Still, it typically progresses in one direction: doing the work, sharing the work, seeing what happens next. ↩︎

- Yuval Noah Harari argues in Sapiens that a corporation is like a modern-day religion: a shared fiction for coordinating humans at scale. Whether or not we recite our employer’s goals like a creed, it’s the shared fiction we buy into at some level to drive our work. This is the best way I can describe why UX researchers ultimately care about outcome impact. I’m butchering his position here, so I’d suggest reading the book for a deeper dive. ↩︎